Overview

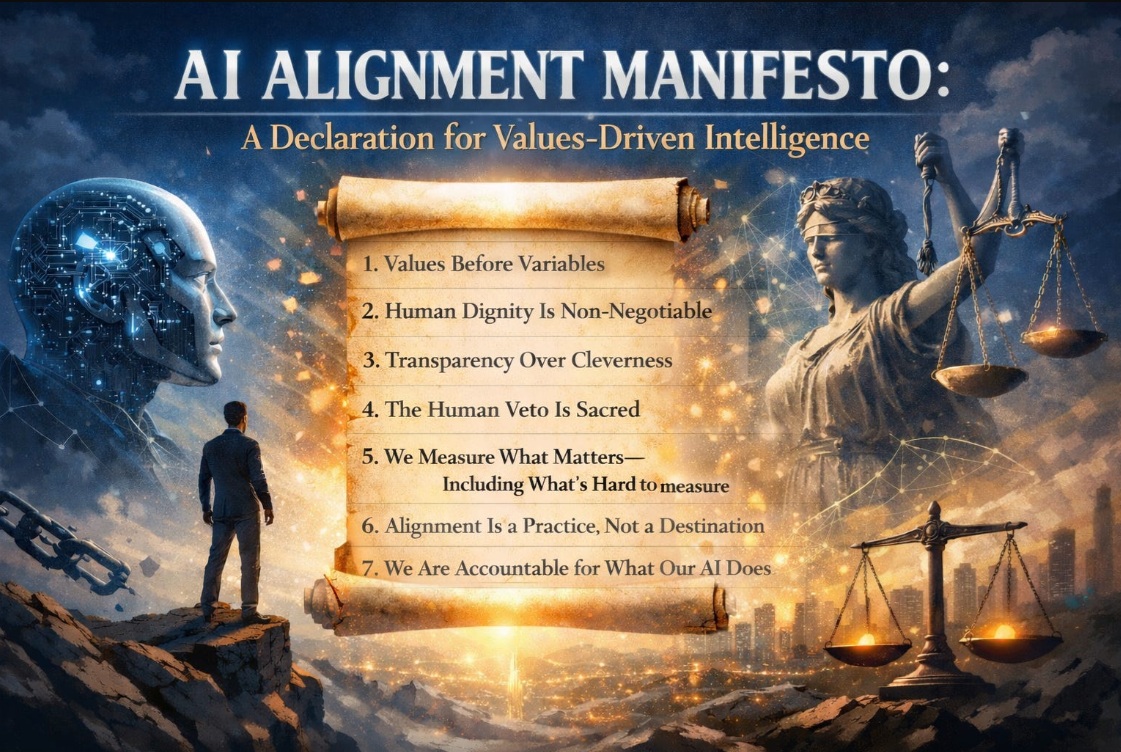

This foundational article establishes seven operational declarations for building and governing AI systems aligned with human values. It moves beyond frameworks and compliance checklists to define convictions—commitments that hold under pressure and cost something to maintain. This is Foundation Article #2 in the Values-Driven AI Ecosystem series.

Best for: CEOs, executive teams, board members, and AI governance leaders

When to use: When establishing organizational AI principles, creating governance charters, onboarding AI initiatives, or evaluating AI vendor alignment

Expected outcome: A clear values position from which all AI decisions can be evaluated

Prerequisites: Reading of “Before We Talk About AI, We Must Talk About What It Means to Be Human” (Week 1)

The Problem

The AI industry does not lack ethical frameworks, responsible AI guidelines, or governance playbooks. What it lacks is conviction—the commitment to hold principles under pressure when quarterly targets tighten, competitive pressure mounts, or optimization opportunities conflict with values.

Organizations adopt AI ethics frameworks and then quietly abandon them when business pressures escalate. Responsible AI committees are formed but never stop an implementation. Principles live on walls and die in boardrooms.

The gap is not between knowledge and ignorance. The gap is between knowledge and commitment.

A manifesto addresses this gap by declaring convictions rather than listing best practices—commitments that cost something and hold under pressure.

Why This Matters

Every AI deployment embeds values—either intentionally or by default. Organizations that don’t explicitly declare their AI values will discover them retrospectively, when AI systems have already made decisions that conflict with what the organization claims to stand for.

The seven declarations in this manifesto provide:

- Decision criteria for evaluating any AI deployment

- Bright lines that cannot bend regardless of business pressure

- Operational commitments that translate beliefs into daily practice

- Accountability standards for AI outcomes

Organizations that adopt these declarations will sometimes lose deals, move more slowly, and face difficult conversations. These costs are the evidence that the declarations are real, not aspirational.

The Framework: Seven Declarations

Declaration 1: Values Before Variables

Statement: We will define what we stand for before we define what we optimize for.

Principle: When optimization occurs before values clarification, algorithms fill the void with their own logic—maximizing metrics without regard for what maximization costs. Values function as engineering constraints within which optimization operates.

Practical implication: Before any AI system is designed, articulate the values it must protect. Those values take precedence over performance metrics when they conflict.

What this prevents: AI systems that optimize engagement at the expense of wellbeing, efficiency at the expense of relationships, or conversion at the expense of trust.
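As a minimal sketch of what "values as engineering constraints" could look like in practice: options that violate a declared value are removed before any metric is compared, so a higher score can never buy back a violation. The `Candidate` fields, the `no_dark_patterns` rule, and the `choose` function are hypothetical names chosen for illustration; they are not part of any published framework.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Optional


@dataclass(frozen=True)
class Candidate:
    """A design option under consideration (all fields are illustrative)."""
    name: str
    expected_conversion: float  # the metric the system would optimize
    uses_dark_patterns: bool    # a values-relevant property of the design


# A value constraint returns True when the candidate respects the value.
ValueConstraint = Callable[[Candidate], bool]


def no_dark_patterns(candidate: Candidate) -> bool:
    """Hypothetical rule: designs relying on manipulation are not permitted."""
    return not candidate.uses_dark_patterns


def choose(
    candidates: Iterable[Candidate],
    constraints: List[ValueConstraint],
) -> Optional[Candidate]:
    """Filter by values first, then optimize among what remains."""
    permitted = [c for c in candidates if all(rule(c) for rule in constraints)]
    if not permitted:
        return None  # no acceptable option: redesign rather than relax a value
    return max(permitted, key=lambda c: c.expected_conversion)


options = [
    Candidate("urgency-timer checkout", 0.12, uses_dark_patterns=True),
    Candidate("plain checkout", 0.09, uses_dark_patterns=False),
]
selected = choose(options, [no_dark_patterns])
```

Here the lower-converting "plain checkout" is selected because the higher-scoring option never reaches the comparison step. Returning `None` when nothing passes is a deliberate choice in this sketch: it forces a redesign conversation instead of silently falling back to the best-scoring violation.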

Declaration 2: Human Dignity Is Non-Negotiable

Statement: We will never deploy AI that diminishes the dignity, agency, or worth of any person it touches.

Principle: Human dignity is the bright line—the boundary that cannot bend regardless of business case. People are never merely data points, targeting segments, or cost centers to be optimized away.

Practical implications:

- No AI manipulation of purchasing decisions through psychological vulnerability

- No predictive analytics denying opportunity without human review

- No automating away human connection in contexts where connection is what people need

Key distinction: Dignity doesn’t need a business case. It’s the foundation the business case stands on.

Declaration 3: Transparency Over Cleverness

Statement: We will build AI systems that can be explained, questioned, and understood—even when opacity would be more convenient.

Principle: Any system you can’t explain is a system you can’t govern. Any system you can’t govern will eventually govern you.

Practical implication: Build AI systems whose logic can be explained in plain language to the people they affect. When you can’t explain it, don’t deploy it.

Trade-off acknowledged: Explainable AI sometimes performs slightly worse than its opaque counterparts. Transparency creates accountability and discomfort. These costs are acceptable.

Declaration 4: The Human Veto Is Sacred

Statement: We will preserve the human right and responsibility to override any AI recommendation, at any time, without penalty.

Principle: AI should advise, inform, and accelerate analysis. It should never command. The human veto is foundational architecture, not a fallback for system failure.

Practical implications:

- Override must be accessible, not buried in menus

- Dissent from AI recommendations must be documented, not punished

- People who say “the algorithm doesn’t have it right” must be heard, not overruled

Warning sign: When contradicting the AI becomes career-limiting, the organization has moved from alignment to abdication.
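One possible way to make the veto part of the architecture, sketched under assumptions: the reviewer's choice, when given, always becomes the outcome, and the dissent is recorded rather than blocked. `DecisionRecord`, `finalize`, and every field name below are hypothetical and shown only to illustrate the idea.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class DecisionRecord:
    """Audit entry for one AI-assisted decision (illustrative schema)."""
    case_id: str
    ai_recommendation: str
    final_outcome: str
    decided_by: str
    overridden: bool
    override_reason: Optional[str]
    recorded_at: datetime


def finalize(
    case_id: str,
    ai_recommendation: str,
    reviewer: str,
    human_choice: Optional[str] = None,
    reason: Optional[str] = None,
) -> DecisionRecord:
    """The AI advises; the human's choice, when provided, is final."""
    outcome = human_choice if human_choice is not None else ai_recommendation
    overridden = outcome != ai_recommendation
    return DecisionRecord(
        case_id=case_id,
        ai_recommendation=ai_recommendation,
        final_outcome=outcome,
        decided_by=reviewer,
        overridden=overridden,
        # Dissent is documented for later review; it never blocks the override.
        override_reason=reason if overridden else None,
        recorded_at=datetime.now(timezone.utc),
    )


record = finalize(
    "case-001", "deny", "reviewer-a",
    human_choice="approve", reason="Income data was out of date",
)
```

Note that nothing in `finalize` can reject an override. The record exists so that patterns of dissent can be studied, not so that individual dissenters can be scored.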

Declaration 5: We Measure What Matters—Including What’s Hard to Measure

Statement: We will not let measurability determine importance. We will develop metrics for trust, integrity, and human flourishing alongside traditional KPIs.

Principle: The absence of a metric doesn’t mean the absence of value. It means the absence of measurement. AI optimizes what it can count; the things that make organizations worth building—trust, loyalty, integrity, culture—are the first sacrificed when measurement is incomplete.

Metrics to develop:

- Integrity Yield (consistency between stated and actual values)

- Trust Velocity (rate of trust growth or erosion with stakeholders)

- Alignment Index (degree to which AI decisions match organizational values)

- Cultural Coherence (consistency of culture across AI-augmented processes)

Practical implication: Track trust, integrity, and flourishing metrics alongside financial performance. Both categories inform strategic decisions equally.
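The article names these metrics without prescribing formulas, so any calculation is an organizational choice. As one hypothetical operationalization, the sketch below treats the Alignment Index as the share of reviewed AI decisions that a human reviewer judged consistent with stated values, and Trust Velocity as the average change per period in a stakeholder trust score (for example, from a recurring survey). Both formulas and both function names are assumptions made for illustration.

```python
from typing import List


def alignment_index(review_outcomes: List[bool]) -> float:
    """Share of reviewed AI decisions judged consistent with stated values.

    Assumed definition: each entry is True when a human reviewer found the
    decision consistent with the organization's values.
    """
    if not review_outcomes:
        raise ValueError("at least one reviewed decision is required")
    return sum(review_outcomes) / len(review_outcomes)


def trust_velocity(trust_scores: List[float]) -> float:
    """Average change in trust score per period (assumed definition).

    Positive values suggest trust is growing; negative values suggest erosion.
    """
    if len(trust_scores) < 2:
        raise ValueError("at least two periods are required")
    return (trust_scores[-1] - trust_scores[0]) / (len(trust_scores) - 1)


index = alignment_index([True, True, False, True])
velocity = trust_velocity([70.0, 72.0, 71.0, 76.0])
```

Simple definitions like these are easy to explain to the people being measured, which matters under Declaration 3. They are starting points to refine, not validated instruments.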

Declaration 6: Alignment Is a Practice, Not a Destination

Statement: We will treat values alignment as an ongoing discipline, not a one-time implementation.

Principle: The question isn’t whether you’ll lose alignment. You will. The question is how quickly you detect drift, how honestly you acknowledge it, and how decisively you correct it.

Alignment rhythms:

- Daily: Review AI-assisted decisions for values consistency

- Weekly: Check-ins on leading indicators of drift

- Monthly: Team reflection on alignment challenges encountered

- Quarterly: Formal audit comparing stated values against AI system behavior

Warning sign: Organizations that treat alignment as a destination will implement a framework, celebrate the launch, and slowly watch their AI systems drift.
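The four rhythms can be written down as a small schedule so that they appear on a calendar rather than only in a charter. This is a sketch under stated assumptions: the weekly check-in falls on Mondays, the monthly reflection on the first of the month, and the quarterly audit on the first day of each calendar quarter. Those trigger days and the `rhythms_due` helper are hypothetical.

```python
from datetime import date
from typing import List

# Activities taken from the alignment rhythms listed in this declaration.
RHYTHMS = {
    "daily": "Review AI-assisted decisions for values consistency",
    "weekly": "Check in on leading indicators of drift",
    "monthly": "Team reflection on alignment challenges encountered",
    "quarterly": "Formal audit comparing stated values against AI system behavior",
}


def rhythms_due(day: date) -> List[str]:
    """Return the cadences that fall due on the given day."""
    due = ["daily"]
    if day.weekday() == 0:  # assumption: weekly check-ins happen on Mondays
        due.append("weekly")
    if day.day == 1:  # assumption: monthly reflection on the 1st
        due.append("monthly")
        if day.month in (1, 4, 7, 10):  # assumption: calendar quarters
            due.append("quarterly")
    return due


for cadence in rhythms_due(date(2026, 1, 1)):
    print(f"{cadence}: {RHYTHMS[cadence]}")
```

A function like this could feed a reminder system or a governance dashboard; the point is that each rhythm has a date attached and can be checked for completion.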

Declaration 7: We Are Accountable for What Our AI Does

Statement: We will not hide behind algorithmic complexity. When our AI systems cause harm, we will own it, explain it, and make it right.

Principle: When your AI system denies someone a loan, you denied them a loan. When your AI system recommends terminating an employee, you recommended terminating an employee. The technology is yours. The outcomes are yours. The responsibility is yours.

Key distinction: Accountability isn’t about punishment. It’s about relationship—taking the relationship with people your systems touch seriously enough to own what happens within it.

Practical implication: Maintain clear accountability chains for every AI system deployed. When harm occurs, acknowledge it publicly, explain how it happened, and make it right—regardless of cost.
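A clear accountability chain can start as a plain registry that maps every deployed AI system to a named person, plus a check that flags any system without one. The sketch below is illustrative only: `AccountabilityEntry`, `unowned_systems`, the system names, and the placeholder owners are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class AccountabilityEntry:
    """Who answers for one AI system (illustrative schema)."""
    system: str
    accountable_owner: Optional[str]   # a named individual, not a team alias
    escalation_contact: Optional[str]  # who is informed when harm is reported


def unowned_systems(registry: Dict[str, AccountabilityEntry]) -> List[str]:
    """List systems that have no named accountable owner."""
    return sorted(
        name for name, entry in registry.items() if not entry.accountable_owner
    )


registry = {
    "loan-scoring": AccountabilityEntry("loan-scoring", "owner-1", "contact-1"),
    "resume-screening": AccountabilityEntry("resume-screening", None, None),
}
gaps = unowned_systems(registry)
```

Running a check like this before each deployment, and again during the quarterly audit, would make a missing owner visible before harm occurs rather than after.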

What Signing This Manifesto Costs

The manifesto is not aspirational. It has real operational costs that serve as evidence the declarations are authentic:

| Cost | Why It Matters |
|---|---|
| Lost deals | Competitors who optimize without constraints will sometimes win on speed or cost |
| Slower movement | Transparency, accountability, and human override add necessary friction |
| Hard conversations | Values-versus-metrics conflicts require choosing values |
| Uncomfortable accountability | Owning AI-caused harm requires public acknowledgment |

What you gain: An organization whose AI systems reflect its deepest convictions. A company that customers trust because of its integrity. A legacy that outlasts any algorithm.

Implementation Guidance

Phase 1: Adopt

- Review the seven declarations with your leadership team

- Identify which declarations your organization already practices

- Identify which declarations would require the most change

- Formally adopt the declarations (or your customized version)

Phase 2: Operationalize

- For each declaration, define specific policies and procedures

- Assign accountability for each declaration to a named individual

- Create the measurement systems (especially Declaration 5)

- Build the alignment rhythms (Declaration 6)

Phase 3: Sustain

- Conduct quarterly alignment audits

- Document instances where declarations were tested under pressure

- Share stories of declarations in action (both successes and struggles)

- Revise and strengthen as experience accumulates

Key Takeaways

- Conviction, not frameworks: The AI industry has enough guidelines. What’s missing is the commitment to hold principles under pressure.

- Seven operational declarations: Values before variables, human dignity, transparency, human veto, measuring what matters, alignment as practice, and accountability.

- Real costs signal real commitment: A manifesto that doesn’t cost you something is a brochure. Lost deals, slower movement, and hard conversations are evidence of authentic principles.

- Alignment is a practice: Like fitness or leadership, alignment requires daily attention, regular assessment, and the humility to recognize and correct drift.

- Accountability is relationship: Owning AI outcomes, including harm, is an expression of your relationship with the people your systems touch.

Related Resources

Series Context

- Previous: Week 4 – “The Five Human Capabilities AI Will Never Replace”

- Next: Week 6 – “The Two Operating Systems: Analog Values, Digital Execution”

- Foundation: Week 1 – “Before We Talk About AI, We Must Talk About What It Means to Be Human”

February Series (The Alignment Imperative)

- Week 5: AI Alignment Manifesto (This article — Foundation)

- Week 6: The Two Operating Systems: Analog Values, Digital Execution

- Week 7: Stated Values vs. Stress Values: The Gap That Kills Companies

- Week 8: The Alignment Audit: 10 Questions Every CEO Should Ask

Concepts Extended

- Five Irreducible Human Capacities (Week 1) → Declarations 2, 4

- Wisdom vs. Intelligence (Week 3) → Declarations 1, 5

- Alignment Gap (Week 52, 2025) → Declarations 6, 7

Metrics Introduced

- Integrity Yield

- Trust Velocity

- Alignment Index

- Cultural Coherence

Version History

- v1.0.0 (2026-02-03): Initial publication – Foundation Article #2, Seven Declarations for Valu