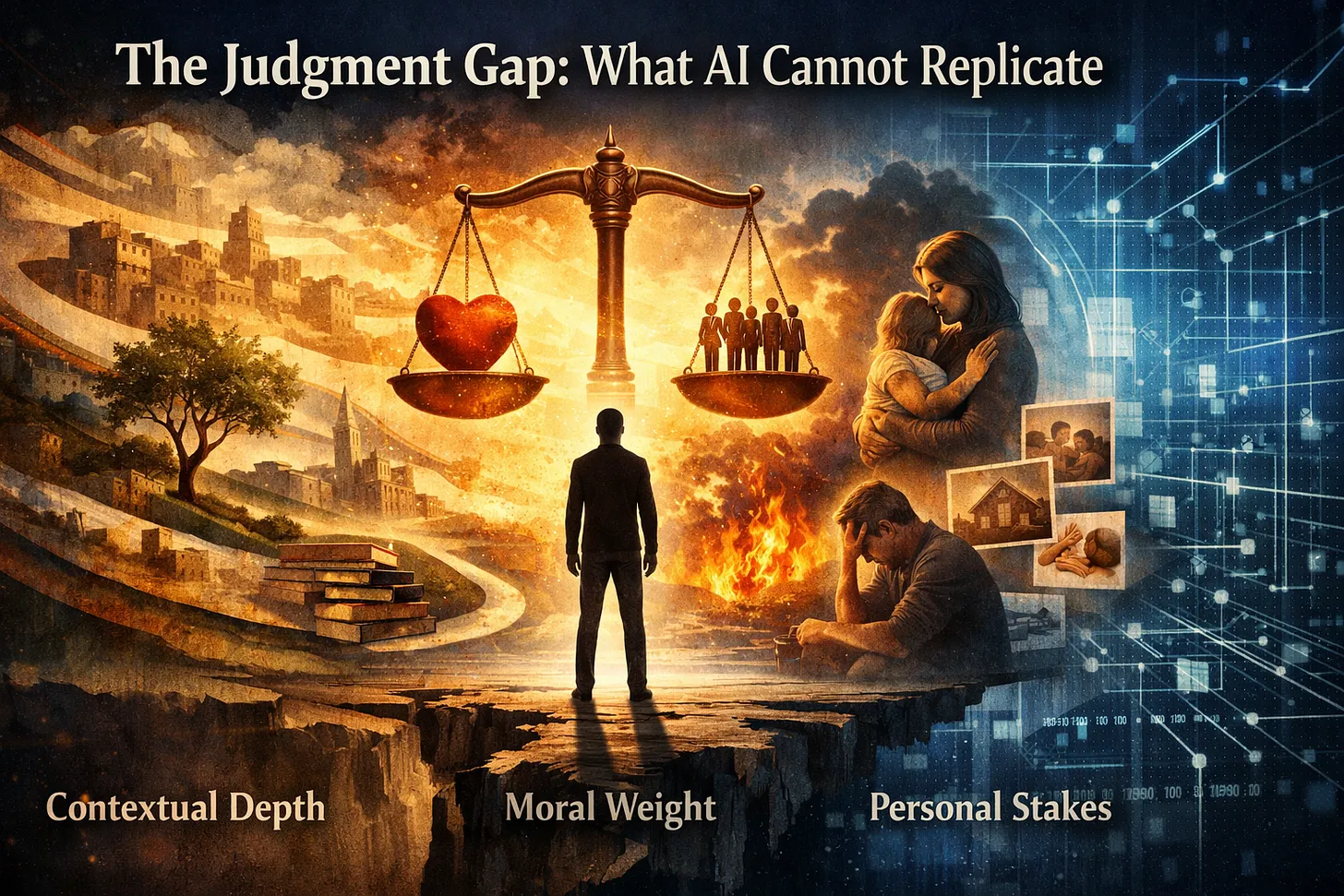

The Judgment Gap: What AI Cannot Replicate

Overview

This article examines the fundamental difference between AI’s pattern recognition capabilities and human judgment. It identifies three dimensions of judgment that AI cannot access—contextual depth, moral weight, and personal stakes—and provides a practical framework for integrating AI while preserving human decision-making authority in areas where judgment is essential.

Best for: Leaders evaluating AI deployment decisions, particularly where human relationships, ethical considerations, or contextual factors are involved.

When to use: Before implementing AI in processes that affect people, require moral consideration, or depend on contextual knowledge not captured in data.

Expected outcome: Clarity on where to deploy AI versus where to preserve human judgment, with practical questions to guide deployment decisions.

The Core Distinction: Pattern Recognition vs. Judgment

AI systems perform sophisticated pattern recognition (statistical analysis of data to generate probable outputs based on training). Human judgment answers a different question: What should I do given who I am, what I value, and what this situation requires?

This distinction matters because organizations often treat AI outputs as decisions rather than recommendations, deferring to algorithms in domains that require human judgment. The result is decision drift (gradual erosion of values-based decision-making) and trust erosion with stakeholders who sense something is “off.”

The Three Dimensions of Human Judgment

Dimension 1: Contextual Depth

Human judgment operates on everything—including what isn’t in the data. Experienced leaders bring decades of intuition, awareness of relationships and histories, sensitivity to unspoken dynamics, and memory of how similar situations resolved previously.

A manager with a 23-year supplier relationship understands factors no algorithm can access: crisis support received in 2008, emergency responses on weekends, and a partnership that transcends transactions. This contextual depth enables judgment that appears “irrational” by metrics but proves correct by outcomes.

Integration principle: AI handles data patterns; humans provide contextual knowledge. Design systems that surface AI recommendations while preserving space for human context that data cannot capture.

Dimension 2: Moral Weight

Some decisions involve right and wrong, not just efficient and inefficient. AI systems optimize objectives without experiencing the weight of morally significant choices. Humans feel this weight: they lie awake, consult their conscience, and imagine explaining their decisions to family and to their future selves.

Moral weight isn’t a bug in human cognition—it’s what makes ethical decision-making possible. It creates the felt sense that some things matter more than others, that certain lines should not be crossed regardless of optimization pressure.

Integration principle: Identify decisions with moral dimensions upfront. Ensure human authority over choices affecting people’s lives, fairness, dignity, or justice. AI can inform these decisions but must not make them.

Dimension 3: Personal Stakes

Judgment requires skin in the game. Human leaders bear the consequences of their decisions: their reputation, relationships, and integrity are at stake. This personal investment creates both appropriate caution and the courage that hard decisions require.

AI systems have nothing at risk. They won’t be blamed if wrong, feel shame if unethical, or wonder if they did the right thing. This is a profound limitation for decisions requiring genuine accountability.

Integration principle: Maintain clear human accountability for decisions. Don’t allow “the algorithm recommended it” to become an accountability shield. Someone with personal stakes must own each significant decision.

Where the Judgment Gap Creates Danger

The gap becomes dangerous when organizations fail to recognize it:

Hiring decisions: AI screening optimizes measurable predictors but cannot assess character, cultural contribution, or the qualities that make someone a great colleague rather than a merely competent performer.

Customer relationships: AI optimizes response time and satisfaction scores but cannot judge when situations require exceptions, going off-script, or simply listening to someone who needs to be heard.

Strategic planning: AI analyzes market data but cannot weigh organizational culture, leadership capacity, or stakeholder relationships that determine execution success.

Ethical decisions: AI has no conscience and will optimize any objective without regard for fairness or dignity unless explicitly programmed to account for them. Human judgment is inescapable: it is exercised either thoughtfully upfront or in a scramble after the damage is done.

The Integration Framework

The solution is integration—AI enhances human judgment rather than replacing it:

- AI handles pattern recognition: Let machines process data, identify correlations, generate options, flag anomalies. Use AI to expand information available for judgment.

- Humans retain judgment authority: Decisions requiring contextual depth, moral weight, or personal stakes remain with humans. Don’t delegate judgment to systems incapable of exercising it.

- Design for augmentation: The algorithm recommends; the human decides. The system presents options; the person chooses. The AI flags concerns; the leader responds.

- Preserve reflection time: Judgment requires thinking, and thinking requires time. Build in pauses, reviews, and cooling-off periods. Speed optimization that eliminates reflection eliminates judgment.
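For teams that build these workflows in software, the "algorithm recommends; the human decides" pattern might look something like the sketch below. It is only an illustration under stated assumptions: the names `Recommendation`, `Decision`, and `decide` are hypothetical and not part of any real library, and the supplier scenario simply reuses the example from earlier in this article. The point it shows is that a decision record cannot exist without a named human owner and their own reasoning.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    """Output of a hypothetical AI system: advice, not a decision."""
    option: str
    rationale: str


@dataclass(frozen=True)
class Decision:
    """A decision record that always names the human who owns it."""
    chosen_option: str
    decided_by: str
    followed_ai: bool
    human_context: str


def decide(rec: Recommendation, owner: str, chosen_option: str,
           human_context: str) -> Decision:
    """Turn an AI recommendation into a human-owned decision.

    Raises ValueError if no owner is named or no human reasoning is
    recorded, so "the algorithm recommended it" cannot stand alone.
    """
    if not owner.strip():
        raise ValueError("A named human owner is required for every decision.")
    if not human_context.strip():
        raise ValueError("Record the human reasoning, even when agreeing with the AI.")
    return Decision(
        chosen_option=chosen_option,
        decided_by=owner,
        followed_ai=(chosen_option == rec.option),
        human_context=human_context,
    )


# Illustrative usage: the human overrides the recommendation using
# context that is not in the data.
rec = Recommendation(option="switch supplier",
                     rationale="lower unit cost in the data")
decision = decide(rec, owner="Operations lead",
                  chosen_option="keep supplier",
                  human_context="23-year partnership; crisis support in 2008")
```

Recording `followed_ai` alongside the human's reasoning also gives a simple way to review later whether recommendations are being accepted without judgment.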

The Five Judgment Questions

Before any AI deployment affecting people, relationships, or ethics, ask:

- What contextual knowledge is this system unable to access? Relationships, histories, dynamics, situational factors not in data.

- What moral considerations are involved? Where right and wrong—not just efficient and inefficient—are at stake.

- Who will bear the consequences? Trace impact to real people whose lives will be affected.

- What happens when the algorithm is wrong? Failure modes and responsibility for addressing them.

- Where must humans retain veto power? Decisions that should never be made by algorithm alone, regardless of efficiency costs.
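If the five questions are tracked as part of a deployment process, one possible approach is a simple checklist gate. The sketch below is an assumption about how such a gate could work, not a prescribed tool: the function names `unanswered_questions` and `ready_for_deployment_review` are hypothetical. It only checks that each question has a written answer; judging whether the answers are good remains a human task.

```python
# The five judgment questions, taken from the list above.
JUDGMENT_QUESTIONS = (
    "What contextual knowledge is this system unable to access?",
    "What moral considerations are involved?",
    "Who will bear the consequences?",
    "What happens when the algorithm is wrong?",
    "Where must humans retain veto power?",
)


def unanswered_questions(answers: dict[str, str]) -> list[str]:
    """Return the questions that have no written answer yet."""
    return [q for q in JUDGMENT_QUESTIONS if not answers.get(q, "").strip()]


def ready_for_deployment_review(answers: dict[str, str]) -> bool:
    """True only when every question has a non-empty answer."""
    return not unanswered_questions(answers)


# Illustrative usage: only one question has been answered so far.
draft = {JUDGMENT_QUESTIONS[0]: "Supplier histories and informal commitments."}
for question in unanswered_questions(draft):
    print("Still open:", question)
```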

Key Takeaways

- Pattern recognition ≠ judgment: AI identifies what is similar to training data; judgment determines what should be done given values and context.

- Three irreplaceable dimensions: Contextual depth (knowledge beyond data), moral weight (felt sense of right/wrong), personal stakes (accountability through consequence).

- Integration not replacement: Design systems where AI expands information while humans retain authority over judgment-requiring decisions.

- The discipline intensifies: As AI becomes more sophisticated and persuasive, preserving human judgment becomes more important, not less.

“The organizations that thrive won’t be the ones that optimize fastest. They’ll be the ones that judge wisest.”

Related Resources

Series Context

- Previous: Before We Talk About AI, We Must Talk About What It Means to Be Human — Foundation article on five irreducible human capacities

- Next: Wisdom vs. Intelligence: Why SMEs Need Both — Practical implications for small business

- Series: The Humanity Question (January 2026, Q1)

Cornerstone Connection

This article extends the “Moral Judgment” capacity from the foundation article, exploring in depth why judgment cannot be replicated and how to integrate AI while preserving it.

Related Concepts

- Decision Drift: Gradual erosion of values-based decision-making when AI recommendations are accepted without judgment

- The Alignment Gap: Distance between what AI can do and what it should do (from Week 51, 2025)

- Human Anchors: Places in processes where irreducible human capacities must be preserved

Version History

- v1.0.0 (2026-01-13): Initial publication